Your team built an AI agent. It works in a demo. Now someone asks: where does it actually run? Who monitors it? What happens when it breaks at 2 a.m.? How do you deploy 50 of them across three departments without losing track of what each one can access?

This is where most agent projects stall. According to Gartner, while most enterprises are experimenting with agentic AI, fewer than one in 10 have agents running in full production. The bottleneck is not building the agent. It is deploying, managing, and governing it at scale.

A new category of product has emerged to solve this: agent platforms. Think of them as fleet management software for AI agents. You have built the vehicles. Now you need a system to dispatch them, track them, maintain them, and make sure none of them drive off a cliff.

This post compares the major agent platforms available today, what they do well, where they fall short, and how to choose the right one for your organization.

Three Things That Get Confused

Before comparing platforms, it helps to name the three categories that keep getting lumped together. They solve different problems, and confusing them leads to bad purchasing decisions.

Frameworks are libraries for building agents. LangChain, CrewAI, the OpenAI Agents SDK, and Google's ADK all fall here. They give you the primitives: tool calling, memory management, planning loops, orchestration. You write the code, you host the result. Think of these as the engine and chassis. We covered frameworks in detail in a previous post.

Proprietary agents are finished products that run on your computer and do work for you. Anthropic's Claude Cowork accesses your local files and automates tasks from your desktop. GitHub Copilot Workspace writes code in your browser. Devin operates as an autonomous software engineer. These are company cars with a driver included. You use them. You do not manage a fleet of them.

Agent platforms are infrastructure for deploying, running, monitoring, and governing agents at scale. This is the fleet management layer. You bring your own agents (or build them on the platform), and the platform handles execution environments, permissions, observability, and lifecycle management. This is the category we are comparing in this post.

What Agent Platforms Actually Do

Regardless of vendor, agent platforms converge on a similar set of capabilities. Understanding these capabilities is more useful than memorizing product names, because the names will change faster than the underlying needs.

Execution environments. Somewhere for your agent to run that is not a developer's laptop. This means sandboxed containers, cloud runtimes, or managed infrastructure where agents can execute code, call APIs, and interact with tools without accidentally affecting production systems.
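Here is a minimal Python sketch of the first step in that direction. It assumes the agent produces Python code that must run somewhere other than the host process. The helper name `run_untrusted` is hypothetical. A separate interpreter with a timeout and an empty environment is only process isolation, not a security boundary. The platforms below add containers or similar managed runtimes on top.

```python
import subprocess
import sys


def run_untrusted(code: str, timeout_s: float = 5.0) -> dict:
    """Run agent-generated Python in a separate interpreter with a hard timeout.

    Hypothetical helper. This is process isolation only: the child can still
    read files and open network connections. Production platforms add a
    container or VM boundary on top.
    """
    try:
        proc = subprocess.run(
            # -I: isolated mode (ignores PYTHON* env vars and user site-packages)
            [sys.executable, "-I", "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
            env={},  # don't leak the parent's environment (API keys, tokens)
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before re-raising TimeoutExpired
        return {"ok": False, "stdout": "", "stderr": f"timed out after {timeout_s}s"}
    return {"ok": proc.returncode == 0, "stdout": proc.stdout, "stderr": proc.stderr}


result = run_untrusted("print(2 + 2)")
print(result["ok"], result["stdout"].strip())
```

The hard timeout matters as much as the isolation. An agent that writes an infinite loop should cost you a few seconds, not a stuck worker.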

Identity and access management. Each agent needs its own identity with scoped permissions, just like a human employee. What systems can it access? What data can it read? What actions can it take? Without this, every agent is a security incident waiting to happen.
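To show what "scoped permissions" means in practice, here is a minimal deny-by-default check. The `AgentIdentity` type and the `action:resource` scope format are hypothetical, chosen for illustration. Each platform in this post has its own permission model.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent identity with an explicit allow-list of scopes."""
    agent_id: str
    owner: str  # the team accountable for this agent
    scopes: frozenset[str] = field(default_factory=frozenset)


def is_allowed(identity: AgentIdentity, action: str, resource: str) -> bool:
    """Deny by default: only explicitly granted 'action:resource' pairs pass.

    A trailing '*' on the granted resource matches any resource with that prefix.
    """
    for scope in identity.scopes:
        granted_action, _, granted_resource = scope.partition(":")
        if granted_action != action:
            continue
        if granted_resource.endswith("*"):
            if resource.startswith(granted_resource[:-1]):
                return True
        elif granted_resource == resource:
            return True
    return False


support_bot = AgentIdentity(
    agent_id="support-triage-01",
    owner="customer-success",
    scopes=frozenset({"read:tickets/*", "write:tickets/comments"}),
)
print(is_allowed(support_bot, "read", "tickets/4812"))   # True
print(is_allowed(support_bot, "write", "tickets/4812"))  # False
print(is_allowed(support_bot, "read", "crm/accounts"))   # False
```

The important design choice is the default. Anything not granted is refused, so a new tool or data source stays invisible to the agent until someone deliberately adds a scope.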

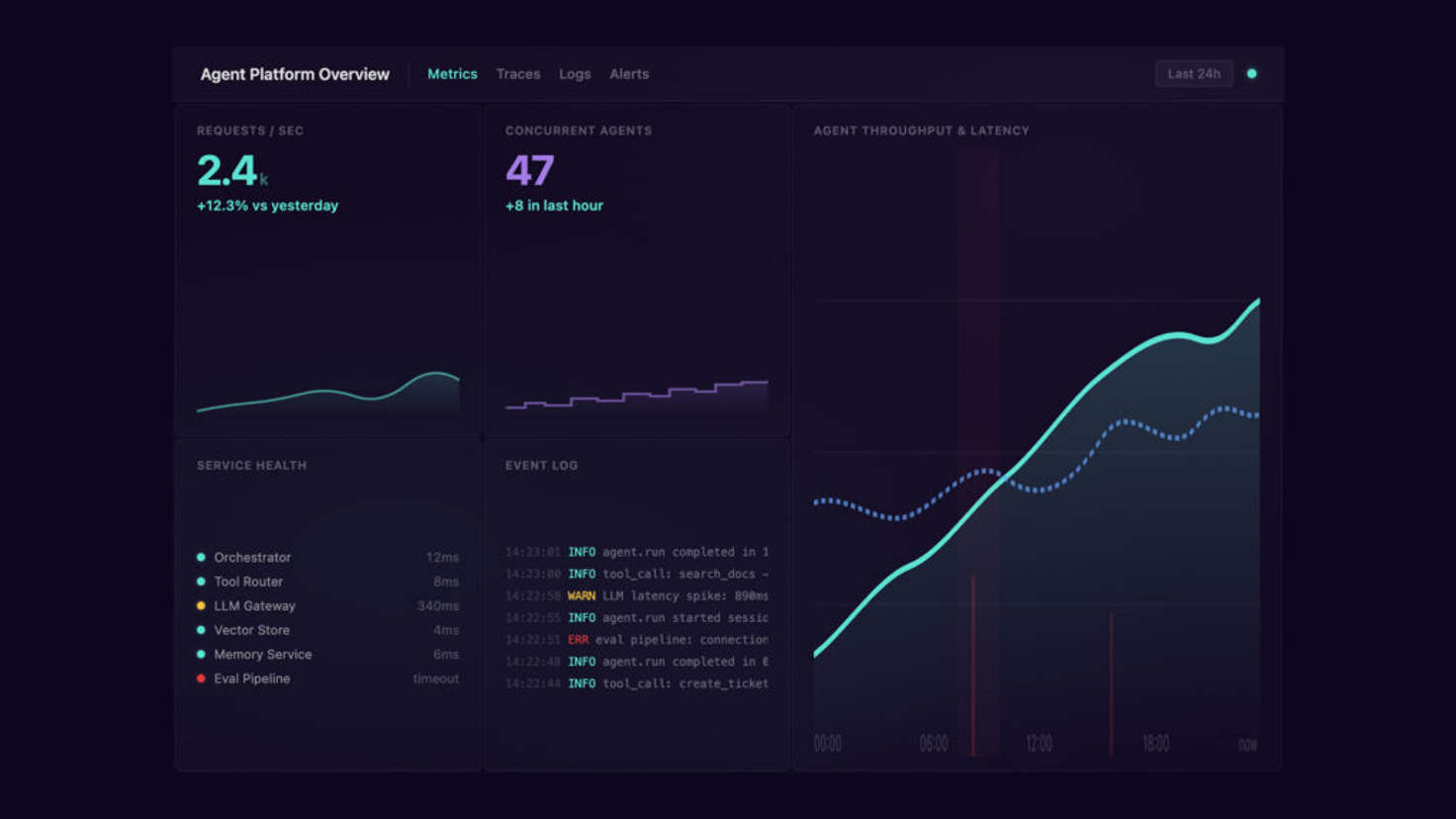

Observability and audit trails. You need to see what your agents are doing, why they made specific decisions, and what went wrong when something fails. Logs, traces, success rates, latency, and cost tracking all fall here.
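An audit trail is only useful if every step records the same fields. The sketch below wraps each agent action in a context manager that logs what the agent did, its stated reason, the latency, and whether the step failed. `audited_step` and the in-memory `AUDIT_LOG` are hypothetical stand-ins for a real tracing backend.

```python
import json
import time
import uuid
from contextlib import contextmanager

AUDIT_LOG: list[dict] = []  # stand-in for a real log sink or tracing backend


@contextmanager
def audited_step(agent_id: str, trace_id: str, action: str, reason: str):
    """Record what an agent did, why, how long it took, and whether it failed."""
    record = {
        "trace_id": trace_id,         # shared by every step in one agent run
        "span_id": uuid.uuid4().hex,  # unique to this step
        "agent_id": agent_id,
        "action": action,
        "reason": reason,             # the agent's stated rationale
        "status": "ok",
    }
    start = time.perf_counter()
    try:
        yield record  # callers can attach extra fields, e.g. token counts
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
        AUDIT_LOG.append(record)


trace = uuid.uuid4().hex
with audited_step("support-triage-01", trace, "lookup_ticket", "user asked for status") as rec:
    rec["tokens_in"] = 812  # placeholder numbers for illustration
    rec["tokens_out"] = 95

try:
    with audited_step("support-triage-01", trace, "update_crm", "close resolved ticket"):
        raise PermissionError("scope write:crm not granted")
except PermissionError:
    pass  # the failure is still recorded in the audit log

print(json.dumps(AUDIT_LOG, indent=2))
```

The shared `trace_id` lets you reconstruct a whole run after the fact. The failed step is logged even though the exception propagates, which is exactly what you need at 2 a.m.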

Business context and integrations. Agents that cannot access your CRM, data warehouse, ticketing system, or internal documentation are agents that cannot do real work. Platforms provide connectors and a semantic layer so agents can pull from the same information your employees use.

Evaluation and improvement. Built-in tools for measuring agent performance, running evaluations, and feeding results back into the system so agents get better over time.
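At its core, an evaluation loop is a fixed set of test prompts, a pass/fail check per prompt, and a report. Here is a minimal sketch of that core. `EvalCase`, `run_eval`, and `toy_agent` are hypothetical, and real platforms layer graders, datasets, and dashboards on top.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # True if the agent's answer is acceptable


def run_eval(agent: Callable[[str], str], cases: list[EvalCase]) -> dict:
    """Run every case and report the pass rate plus the failures to inspect."""
    failures = []
    for case in cases:
        try:
            answer = agent(case.prompt)
            passed = case.check(answer)
        except Exception as exc:  # a crash counts as a failure, not a skip
            answer, passed = repr(exc), False
        if not passed:
            failures.append({"prompt": case.prompt, "answer": answer})
    pass_rate = 1 - len(failures) / len(cases) if cases else 0.0
    return {"pass_rate": pass_rate, "failures": failures}


def toy_agent(prompt: str) -> str:
    # Hypothetical stand-in for a real agent call.
    return "refund approved" if "refund" in prompt else "escalate to human"


cases = [
    EvalCase("Customer requests a refund for order 123", lambda a: "refund" in a),
    EvalCase("Customer reports a security breach", lambda a: "escalate" in a),
    EvalCase("Customer asks to cancel a subscription", lambda a: "cancel" in a),
]
report = run_eval(toy_agent, cases)
print(f"pass rate: {report['pass_rate']:.0%}")  # pass rate: 67%
for failure in report["failures"]:
    print("FAILED:", failure["prompt"], "->", failure["answer"])
```

One way to close the loop is to run this on every prompt, tool, or model change and treat a drop in pass rate as a release blocker. The failures list is what you feed back into the next round of fixes.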

The Platforms

OpenAI Frontier

Frontier launched in February 2026 and represents OpenAI's bid to become the operating system of the enterprise. It is the most ambitious platform on this list in terms of scope.

What it does well. Frontier creates a shared business context layer that connects enterprise systems (CRM tools, data warehouses, internal applications) so agents can access the same information employees do. Every agent gets its own identity with explicit permissions and boundaries. The platform includes built-in evaluation and optimization loops. Critically, it is vendor-neutral on the agent side: Frontier works with OpenAI's own agents, agents you build yourself, and agents from third parties including Google, Microsoft, and Anthropic.

Where it falls short. Frontier is available only to a small group of enterprise customers (HP, Intuit, Oracle, State Farm, Uber, and a few others at launch). There is no public pricing. The vendor-neutrality claim is strong on paper, but the platform is new and the depth of third-party agent support remains to be seen. The "AI coworker" framing, complete with onboarding and feedback loops modeled on HR processes, is either visionary or premature depending on your perspective.

Best fit. Large enterprises that need to coordinate agents across multiple departments and business systems. Organizations that want a single control plane regardless of which models or frameworks their agents use.

Warp Oz

Oz launched in February 2026 as a cloud orchestration platform specifically for coding agents. While Frontier aims to be an enterprise-wide operating system, Oz is laser-focused on engineering teams.

What it does well. Oz lets you run hundreds of coding agents in parallel in secure, sandboxed Docker environments. Every agent run generates a shareable link and audit trail accessible via CLI, web, or mobile. Agents can make multi-repo changes, push commits, open pull requests, and run tests autonomously. The "Skills" system provides reusable instruction sets, and agents can be scheduled like cron jobs or triggered by webhooks. Oz supports multiple models (Claude, Codex, Gemini) and offers both cloud-hosted and self-hosted deployment.

Where it falls short. Oz is built for coding workflows. If your agents need to process customer support tickets, run financial analyses, or operate across non-engineering business systems, this is not the right platform. It is also relatively new and still establishing its enterprise governance features compared to the cloud incumbents.

Best fit. Engineering teams that have adopted AI coding agents (Claude Code, Codex, Gemini) and need to scale them beyond individual laptops. Organizations where the primary agent use case is automating development workflows: code review, documentation, bug triage, security scanning, and cross-repo refactoring.

AWS Bedrock Agents

Amazon Bedrock Agents is the most mature managed agent service from a cloud hyperscaler. If your infrastructure already lives on AWS, this is the path of least resistance.

What it does well. Model flexibility is the headline feature. You can build agents using Claude, Llama, Mistral, Cohere, or Amazon's own models, and switch between them without rewriting your agent logic. Integration with the AWS ecosystem is deep: Lambda for custom actions, S3 for storage, Knowledge Bases for RAG, CloudWatch for monitoring. Bedrock Agents is fully managed, meaning you do not provision or maintain the underlying infrastructure. Pay-per-use pricing scales cleanly.

Where it falls short. You are on AWS. That is both the strength and the limitation. If your organization runs a multi-cloud strategy or wants to avoid cloud lock-in, Bedrock Agents ties you to the AWS ecosystem. The developer experience is more complex than that of lighter-weight options, and the agent capabilities are less opinionated than Frontier's "business context" approach, meaning you do more of the integration work yourself.

Best fit. Organizations already invested in AWS that want a managed, model-agnostic agent runtime with enterprise-grade security and compliance. Teams that need fine-grained control over model selection and want to avoid single-model vendor lock-in.

Azure AI Agent Service

Azure AI Agent Service is Microsoft's managed agent runtime, and its value proposition is inseparable from the Microsoft ecosystem.

What it does well. If your organization runs on Microsoft 365, Teams, SharePoint, and Dynamics, Azure AI Agent Service connects agents to those systems with minimal friction. Deep integration with Semantic Kernel (Microsoft's open-source agent framework) and Copilot Studio means you can build agents visually or in code. The recent addition of Anthropic's Claude models to Microsoft Foundry gives Azure customers access to both OpenAI and Anthropic models on the same platform.

Where it falls short. The same lock-in concern as Bedrock, but on the Microsoft side. The agent capabilities are tightly coupled with Azure services, and while Semantic Kernel is open source, the managed platform experience is Azure-only. Organizations that do not use Microsoft 365 extensively will get less value from the integration story.

Best fit. Microsoft shops. Organizations where employees live in Teams, Outlook, and SharePoint, and where agents need to operate inside those workflows natively.

Google Vertex AI Agent Builder

Vertex AI Agent Builder is Google Cloud's entry, tightly integrated with the Gemini model family and GCP services.

What it does well. Strong integration with BigQuery, Cloud Functions, and Google Workspace. The Agent Development Kit (ADK) is open source and pairs naturally with the managed platform. Google's enterprise search capabilities give agents access to structured and unstructured data across your organization. Gemini 2.5 models provide strong multimodal capabilities out of the box.

Where it falls short. GCP has the smallest enterprise market share of the three major clouds, which means fewer organizations have existing infrastructure to build on. The agent tooling, while powerful, is optimized for Gemini models, and third-party model support is less mature than Bedrock's. Google's enterprise product track record (frequent rebranding and deprecations) gives some buyers pause.

Best fit. Organizations already on GCP, particularly those using BigQuery for analytics. Teams that want to build on Gemini's multimodal strengths (vision, audio, long context) as a core part of their agent capabilities.

Salesforce Agentforce

Agentforce is the most vertically focused platform on this list. It is not a general-purpose agent runtime. It is an agent layer built into Salesforce.

What it does well. If your agents need to operate inside sales and customer service workflows, Agentforce has an unfair advantage: it already has access to your customer data, pipeline, case history, and business rules. No integration project required. Agents can automate lead qualification, case routing, customer follow-ups, and reporting without leaving the Salesforce ecosystem. For organizations that measure success in pipeline velocity and case resolution time, time-to-value is shorter than with any general-purpose platform.

Where it falls short. Agentforce is Salesforce. If your agent use cases extend beyond CRM and customer service (and they will), you need a second platform. The pricing follows Salesforce's per-conversation model, which can get expensive at scale. And if you ever want to move off Salesforce, your agent investment stays behind.

Best fit. Sales and customer service organizations already deep in the Salesforce ecosystem. Teams where the first agent use cases are lead qualification, case triage, or customer communication automation.

LangGraph Platform

LangGraph Platform is the hosted deployment option from the LangChain team. It bridges the gap between the open-source LangGraph framework and a managed production environment.

What it does well. If you are already building agents with LangChain or LangGraph (and with 47 million-plus PyPI downloads, many teams are), the Platform provides a natural path to production. It includes LangSmith for tracing, evaluation, and monitoring. Cloud deployment is straightforward, and the framework itself is model-agnostic, so you are not locked into any single LLM provider. The self-hosted option gives you full control.

Where it falls short. LangGraph Platform is an infrastructure play, not a business context play. It does not provide the CRM connectors, data warehouse integrations, or agent identity management that enterprise platforms like Frontier or Bedrock offer. You get deployment and observability, but you build the business integration layer yourself.

Best fit. Developer teams that have built agents on LangChain or LangGraph and need a production hosting environment with monitoring. Organizations that want framework-level flexibility with a managed deployment option.

CrewAI Enterprise

CrewAI Enterprise is the hosted tier of CrewAI, the fastest-growing framework for multi-agent collaboration.

What it does well. CrewAI's role-based architecture (assigning agents specific roles, goals, and expertise within a "crew") translates naturally to business workflows. The Enterprise tier adds managed deployment, monitoring, and team management on top of the open-source framework. For teams that think about agents as specialized collaborators rather than individual tools, the mental model is intuitive.

Where it falls short. CrewAI Enterprise is newer and less battle-tested than the cloud hyperscaler offerings. The ecosystem is smaller, the enterprise governance features are still maturing, and organizations with strict compliance requirements may find the platform lacking compared to Bedrock or Azure. Like LangGraph Platform, it is primarily an infrastructure and orchestration layer, not a business context layer.

Best fit. Teams that have adopted the CrewAI framework and want a managed path to production. Organizations building multi-agent systems where role-based collaboration is central to the design.

Dify (Open Source)

Dify is the open-source option on this list. With over 130,000 GitHub stars and an Apache 2.0 license, it is a full agent platform you can self-host on your own infrastructure.

What it does well. Dify provides a visual workflow builder for designing agents and multi-step AI workflows without writing code, alongside a complete backend for running them in production. It supports hundreds of LLMs out of the box, including OpenAI, Anthropic, Mistral, and self-hosted models via Ollama or vLLM, so you can route simple queries to a local model and complex reasoning to a frontier model within the same workflow. Built-in RAG pipelines, 50-plus pre-built tools, prompt management, and LLMOps monitoring round out the feature set. The self-hosted deployment means your data never leaves your infrastructure, which matters for teams with strict data sovereignty or compliance requirements. Dify also offers a hosted cloud tier for teams that want the capabilities without the operations overhead.
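In Dify this routing is configured visually in a workflow. The Python below is not Dify's API. It is a minimal sketch of the routing logic itself, with a keyword-and-length heuristic standing in for whatever classification step you actually use. The model identifiers and the keyword list are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str   # hypothetical model identifiers, not real product names
    reason: str


# Assumption: these words signal multi-step reasoning rather than a lookup.
REASONING_HINTS = re.compile(
    r"\b(why|compare|plan|analy[sz]e|trade-?offs?|design)\b", re.IGNORECASE
)


def route(query: str, max_simple_words: int = 30) -> Route:
    """Send short lookups to a self-hosted model and hard questions to a frontier model."""
    if REASONING_HINTS.search(query):
        return Route("frontier-large", "reasoning keyword")
    if len(query.split()) > max_simple_words:
        return Route("frontier-large", "long query")
    return Route("local-small", "short lookup")


print(route("What are the support hours?"))
print(route("Compare the refund policies for EU and US customers"))
```

The appeal is cost and data control. Routine questions never leave your infrastructure, and you pay frontier-model prices only for the queries that need them.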

Where it falls short. Self-hosting means you own the infrastructure: upgrades, scaling, backups, and security patches are your responsibility. The enterprise governance features (agent identity, fine-grained permissions, audit trails) are less mature than what the cloud hyperscalers or Frontier provide. And while the visual builder is accessible, teams building highly custom agent architectures may find it more constrained than a pure-code framework. The community is large and active, but support is community-driven unless you are on an enterprise plan.

Best fit. Teams that need full control over their agent infrastructure and data. Organizations with data sovereignty requirements that rule out sending data to third-party platforms. Budget-conscious teams that want a production-ready platform without per-seat or per-query SaaS pricing. Dify is also a strong choice for teams that want to experiment with multiple models and workflows before committing to a cloud vendor.

How to Choose: Enterprise vs. Small Team

The right platform depends less on which one has the best feature list and more on where your organization sits today.

Enterprise Organizations

If you are deploying agents across multiple departments, connecting them to systems of record, and need SOC 2, HIPAA, or data residency compliance, your realistic options are Frontier, Bedrock Agents, Azure AI Agent Service, or Vertex AI Agent Builder. The decision usually comes down to where your existing infrastructure lives.

Already on AWS? Bedrock Agents is the straightforward choice. Microsoft shop? Azure AI Agent Service. On GCP? Vertex AI Agent Builder. Want a vendor-neutral control plane that sits above your existing cloud? That is the promise Frontier is making, though it is too early to validate.

Salesforce-heavy organizations should evaluate Agentforce for CRM-specific agent use cases alongside a general-purpose platform for everything else.

The key enterprise concerns are agent identity and access management (who can this agent impersonate, what can it touch?), audit trails and compliance (can you prove to a regulator what your agent did and why?), multi-model support (can you swap models without rewriting agents as the market shifts?), and integration depth with your existing business systems.

Small and Mid-Size Teams

If you are a team of five to 50 engineers deploying your first production agents, the hyperscaler platforms may be overkill. You need speed to deploy, cost predictability, and minimal operations overhead.

For coding-focused agent workflows, Warp Oz gives you cloud orchestration without building your own infrastructure. For general-purpose agents, LangGraph Platform or CrewAI Enterprise provide managed hosting that grows with your needs. Both let you start with the open-source framework and graduate to the hosted tier when you are ready for production. If you want to own the entire stack and avoid SaaS pricing entirely, Dify gives you a production-ready platform you can self-host with Docker Compose in an afternoon.

The key small-team concerns are time to first deployed agent (how quickly can you go from working prototype to production?), cost at your current scale (not at Fortune 500 scale), model flexibility (can you experiment with different models without re-platforming?), and how much operational complexity you are willing to take on.

What to Watch

This market is weeks old in some cases. Here are a few trends worth tracking as it matures.

Convergence on MCP. Anthropic's Model Context Protocol is emerging as a standard for how agents connect to external tools and data sources. Platforms that adopt MCP will benefit from a growing ecosystem of pre-built integrations, similar to how REST APIs standardized web service communication. This could reduce the switching costs between platforms over time.
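Part of what makes MCP portable is that its messages are plain JSON-RPC 2.0. A client discovers a server's tools with `tools/list` and invokes one with `tools/call`, passing the tool's `name` and `arguments`. The sketch below only builds those message bodies. A real session first performs MCP's `initialize` handshake and sends messages over a transport such as stdio or HTTP, which the official SDKs handle for you. The tool name `search_tickets` is hypothetical.

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Discover what a server offers, then invoke one tool by name.
list_tools = mcp_request("tools/list")
call_tool = mcp_request(
    "tools/call",
    {"name": "search_tickets", "arguments": {"query": "refund", "limit": 5}},  # hypothetical tool
)
print(list_tools)
print(call_tool)
```

Because any platform that speaks these messages can call the same MCP server, an integration written once does not have to be rewritten when you switch platforms.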

The vendor-neutral vs. ecosystem play. Frontier and the framework-based platforms (LangGraph, CrewAI) are betting on vendor neutrality. The cloud hyperscalers are betting on ecosystem depth. Both have strong arguments. The question is whether enterprises will prioritize flexibility or integration, and the answer is probably different for every organization.

Agent identity as a real discipline. Right now, most platforms treat agent identity as a feature checkbox. As organizations deploy dozens or hundreds of agents with access to sensitive systems, agent IAM will become as critical (and as complex) as human IAM. The platforms that get this right early will have a significant advantage.

The line between platform and product will blur. Frontier already works with third-party agents. Cowork already has enterprise plugins. It is easy to imagine a future where Cowork-like proprietary agents run on Frontier-like platforms, and the categories we defined at the top of this post collapse into a single integrated stack. We are not there yet, but the trajectories point in that direction.

Choosing an Agent Platform

The fleet management analogy holds up. You would not buy a fleet of delivery trucks without first knowing your routes, your cargo, your compliance requirements, and your budget. The same applies to agent platforms. Start with the use case, not the vendor. Map the agents you plan to deploy, the systems they need to access, and the governance requirements they need to meet. Then evaluate platforms against those specific needs.

And design for portability. The agent platform market in February 2026 will look different from the agent platform market in February 2027. The organizations that build clean abstractions between their agent logic and their platform infrastructure will be the ones that can adapt without starting over.
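One common way to build that abstraction is a ports-and-adapters split. Agent code depends only on a small interface it owns, and each platform gets a thin adapter behind that interface. Here is a minimal Python sketch. `PlatformPort`, `LocalPlatform`, and the secret name are hypothetical, and a real port would likely also cover model calls, tool access, and identity.

```python
from typing import Protocol


class PlatformPort(Protocol):
    """What the agent needs from whatever platform hosts it (hypothetical interface)."""

    def get_secret(self, name: str) -> str: ...

    def audit(self, event: str, **fields: object) -> None: ...


class LocalPlatform:
    """In-memory adapter for tests. A cloud adapter would implement the same two
    methods on top of that vendor's secret store and logging service."""

    def __init__(self, secrets: dict[str, str]) -> None:
        self._secrets = secrets
        self.events: list[tuple[str, dict]] = []

    def get_secret(self, name: str) -> str:
        return self._secrets[name]

    def audit(self, event: str, **fields: object) -> None:
        self.events.append((event, fields))


def triage_agent(platform: PlatformPort, ticket: str) -> str:
    """Agent logic with no vendor SDK imports: it only talks to the port."""
    # A real agent would hand this key to its ticketing client.
    api_key = platform.get_secret("TICKETING_API_KEY")
    if not api_key:
        raise RuntimeError("missing ticketing credentials")
    priority = "high" if "outage" in ticket.lower() else "normal"
    platform.audit("ticket_triaged", priority=priority)
    return priority


local = LocalPlatform({"TICKETING_API_KEY": "test-key"})
print(triage_agent(local, "Full outage in the EU region"))  # high
print(local.events)
```

Moving to a different platform then means writing one new adapter class. The agent itself does not change, and the in-memory adapter doubles as a test harness.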

We have been helping clients navigate exactly this decision: evaluating agent platforms, building agents on top of them, and integrating them with existing business systems. If you are moving from agent prototypes to production deployment and need a team that understands both the AI architecture and the enterprise integration work, we would like to hear about your project.